
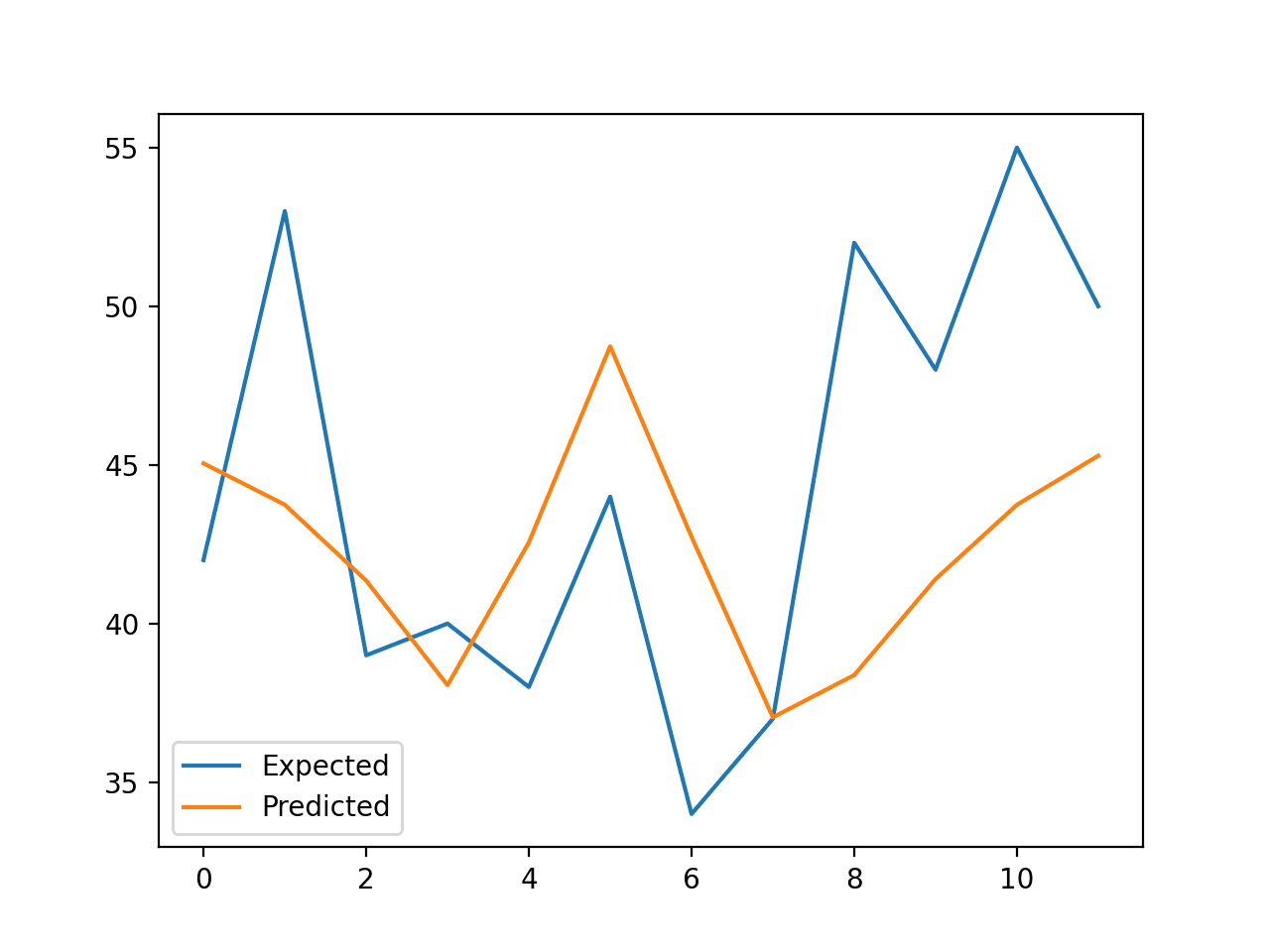

Random forest prediction

12/15/2023

Random forest is a popular ensemble learning method for classification and regression. Ensemble learning methods combine multiple machine learning (ML) algorithms to obtain a better model: the wisdom of crowds applied to data science. They're based on the concept that a group of people with limited knowledge about a problem domain can collectively arrive at a better solution than a single person with greater knowledge.

Random forest is an ensemble of decision trees, a problem-solving metaphor that's familiar to nearly everyone. Decision trees arrive at an answer by asking a series of true/false questions about elements in a data set. For example, to predict a person's income, a decision tree looks at variables (features) such as whether the person has a job (yes or no) and whether the person owns a house. In an algorithmic context, the machine continually searches for the feature that splits the observations in a set so that the resulting groups are as different from each other as possible and the members of each subgroup are as similar to each other as possible.

Random forest uses a technique called "bagging" to build full decision trees in parallel from random bootstrap samples of the data set and features. A single decision tree is grown from a fixed set of features and often overfits, so this randomness is critical to the success of the forest. It ensures that individual trees have low correlations with each other, which reduces the variance of the combined prediction. The presence of a large number of trees also reduces the problem of overfitting, which occurs when a model incorporates too much "noise" from the training data and makes poor decisions as a result.

With a random forest model, the chance of making correct predictions increases with the number of uncorrelated trees in the model. The results are of higher quality because they reflect decisions reached by the majority of trees. This voting process protects the individual trees from each other by limiting errors. Even though some trees are wrong, others will be right, so the group of trees collectively moves in the correct direction. While random forest models can run slowly when many features are considered, even small models working with a limited number of features can produce very good results.

There are four principal advantages to the random forest model. First, it's well-suited for both regression and classification problems. The output variable in regression is a numeric value, such as the price of a house in a neighborhood. The output variable in a classification problem is usually a single answer, such as whether a house is likely to sell above or below the asking price. Second, it handles missing values and can maintain high accuracy even when large amounts of data are missing, thanks to bagging and sampling with replacement. Third, the "majority rules" output makes overfitting much less likely than with a single tree. Fourth, the model can handle very large data sets with thousands of input variables, and its algorithms can be used to identify the most important features in the training data set, which makes it a good tool for dimensionality reduction.

There are also a couple of disadvantages. Random forests outperform decision trees, but their accuracy is lower than that of gradient-boosted tree ensembles such as XGBoost. With a large number of trees, random forests can also be slower than XGBoost.

Gradient-boosting decision trees (GBDTs) are a decision tree ensemble learning algorithm similar to random forest for classification and regression. Both random forest and GBDT build a model consisting of multiple decision trees. The difference is how the trees are built and combined. GBDT uses a technique called boosting to iteratively train an ensemble of shallow decision trees, with each iteration using the residual error of the previous model to fit the next model. The final prediction is a weighted sum of all the tree predictions. Random forest bagging minimizes variance and overfitting, while GBDT boosting reduces bias and underfitting.

XGBoost (eXtreme Gradient Boosting) is a widely used, scalable, distributed implementation of GBDT. Like any GBDT, it still adds trees one after another, but it parallelizes the work of building each tree. XGBoost follows a level-wise strategy, scanning across gradient values and using these partial sums to evaluate the quality of every possible split in the training set.
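To make the contrast between bagging and boosting concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how random forest libraries or XGBoost are implemented: the trees are one-split "stumps" rather than full trees, the data has a single feature (so there is no random feature selection), and all function names (`fit_stump`, `fit_bagged`, `fit_boosted`, and so on) are made up for this example.

```python
import random


def fit_stump(xs, ys):
    """Fit a one-split regression tree ("stump") on 1-D data.

    Tries the midpoint between each pair of neighbouring x values and keeps
    the split with the lowest squared error, so the two groups are as
    internally similar as possible. Returns (threshold, left_mean, right_mean).
    """
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold fits between two identical x values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((y - left_mean) ** 2 for y in left)
                 + sum((y - right_mean) ** 2 for y in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    if best is None:  # every x is identical: predict the mean everywhere
        mean = sum(ys) / len(ys)
        return (float("inf"), mean, mean)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean


def fit_bagged(xs, ys, n_trees, rng):
    """Bagging: each stump is trained independently on its own bootstrap sample."""
    n = len(xs)
    stumps = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        stumps.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return stumps


def predict_bagged(stumps, x):
    """Regression forests average the trees; classifiers would take a majority vote."""
    return sum(predict_stump(s, x) for s in stumps) / len(stumps)


def fit_boosted(xs, ys, n_trees, learning_rate):
    """Boosting: each stump is fitted to the residual error of the model so far."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_trees):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, x)
                 for p, x in zip(preds, xs)]
    return base, learning_rate, stumps


def predict_boosted(model, x):
    """The prediction is the base value plus a weighted sum of all stump outputs."""
    base, learning_rate, stumps = model
    return base + learning_rate * sum(predict_stump(s, x) for s in stumps)


# Toy data: y = x^2 on 40 evenly spaced points (made up for illustration).
xs = [i / 10 for i in range(40)]
ys = [x * x for x in xs]
forest = fit_bagged(xs, ys, n_trees=25, rng=random.Random(0))
boosted = fit_boosted(xs, ys, n_trees=50, learning_rate=0.5)
print("bagged  prediction at x=2.0:", predict_bagged(forest, 2.0))
print("boosted prediction at x=2.0:", predict_boosted(boosted, 2.0))
```

The bagged stumps never see each other's output, so they could be trained in parallel, while each boosted stump depends on the predictions of all the stumps before it. That dependency is why boosting is sequential across trees.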
